Designing Trust in AI-Driven Decision Workflows

Redesigned an engineer-built AI proof-of-concept into a structured, auditable MVP for processing high-volume legal court orders — balancing automation with human oversight in a high-compliance financial services context.

Guidehouse: AI Studio – Financial Services · 2025

Overview

From AI prototype to auditable decision system

An AI-driven workflow designed to process legally sensitive court orders using extraction, validation, and structured human approval. This project evolved from an early AI prototype into a configurable decision system — and this case study walks through the key design decisions behind that transformation.

The Problem

Banks receive high volumes of garnishments, subpoenas, and levies. Each document requires validation, customer lookup, and a compliant action — even in “No Customer Found” cases. The process was largely manual, slow, and high risk. The real problem wasn't automation. It was decision clarity in a high-stakes workflow.

How I Approached It

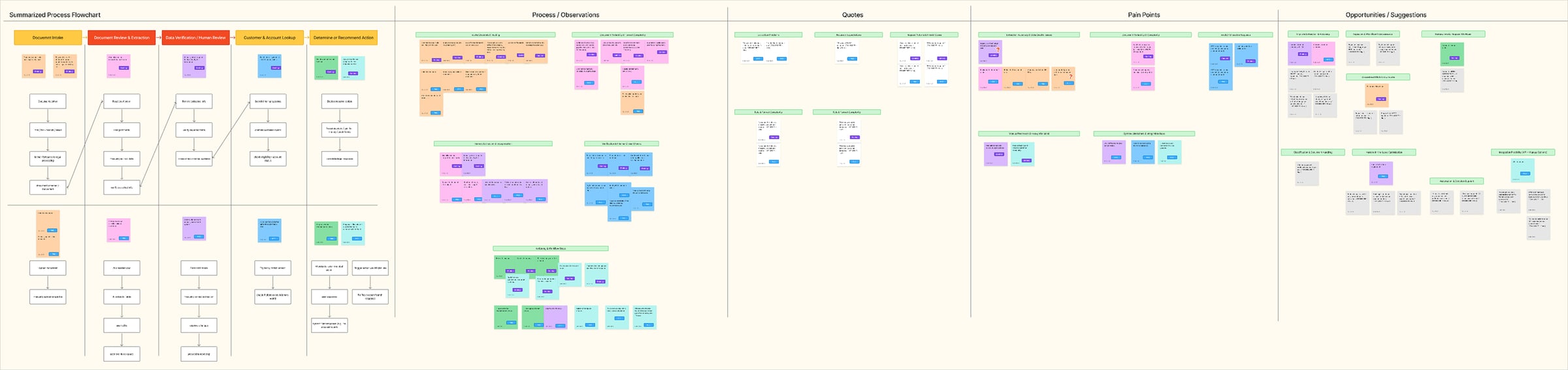

Research & Discovery

We were taking over an AI POC built by two engineers — no formal UX discovery, no written requirements, no direct access to banking users. I ran structured conversations with stakeholders, legal SMEs, and financial services representatives. I mapped the current banking workflow and compared it against what the POC actually supported. The core insight: the friction wasn't extraction. It was decision clarity — when to trust AI, when to override, and who owns the final action.

Affinity Synthesis

I created journey maps for both realities — current state and POC — then grouped observations into an affinity map across process gaps, quotes, pain points, and opportunities. That's when the end-to-end decision flow became clear.

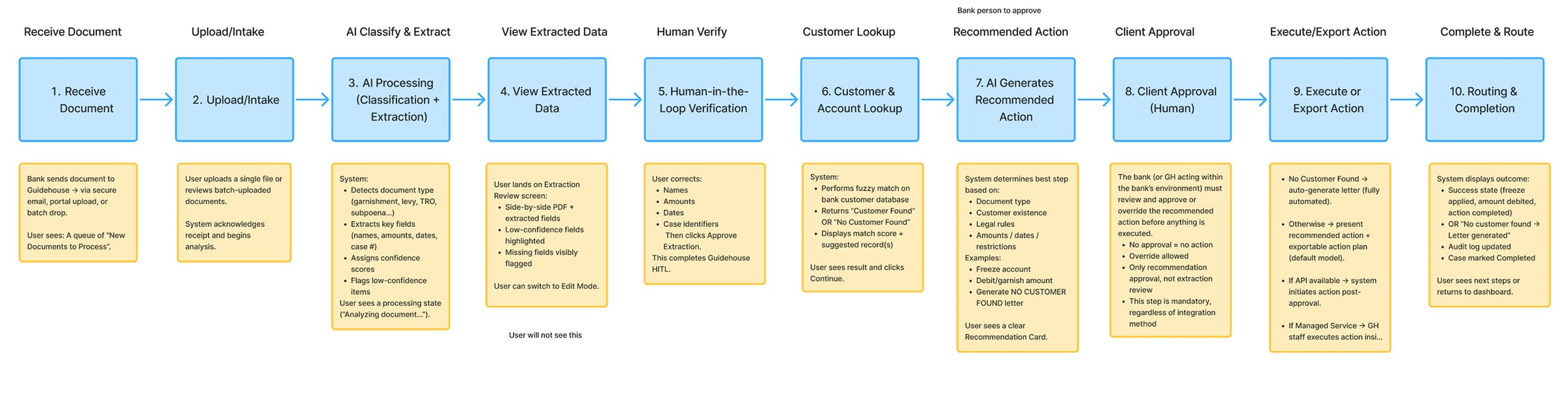

Workflow Modeling

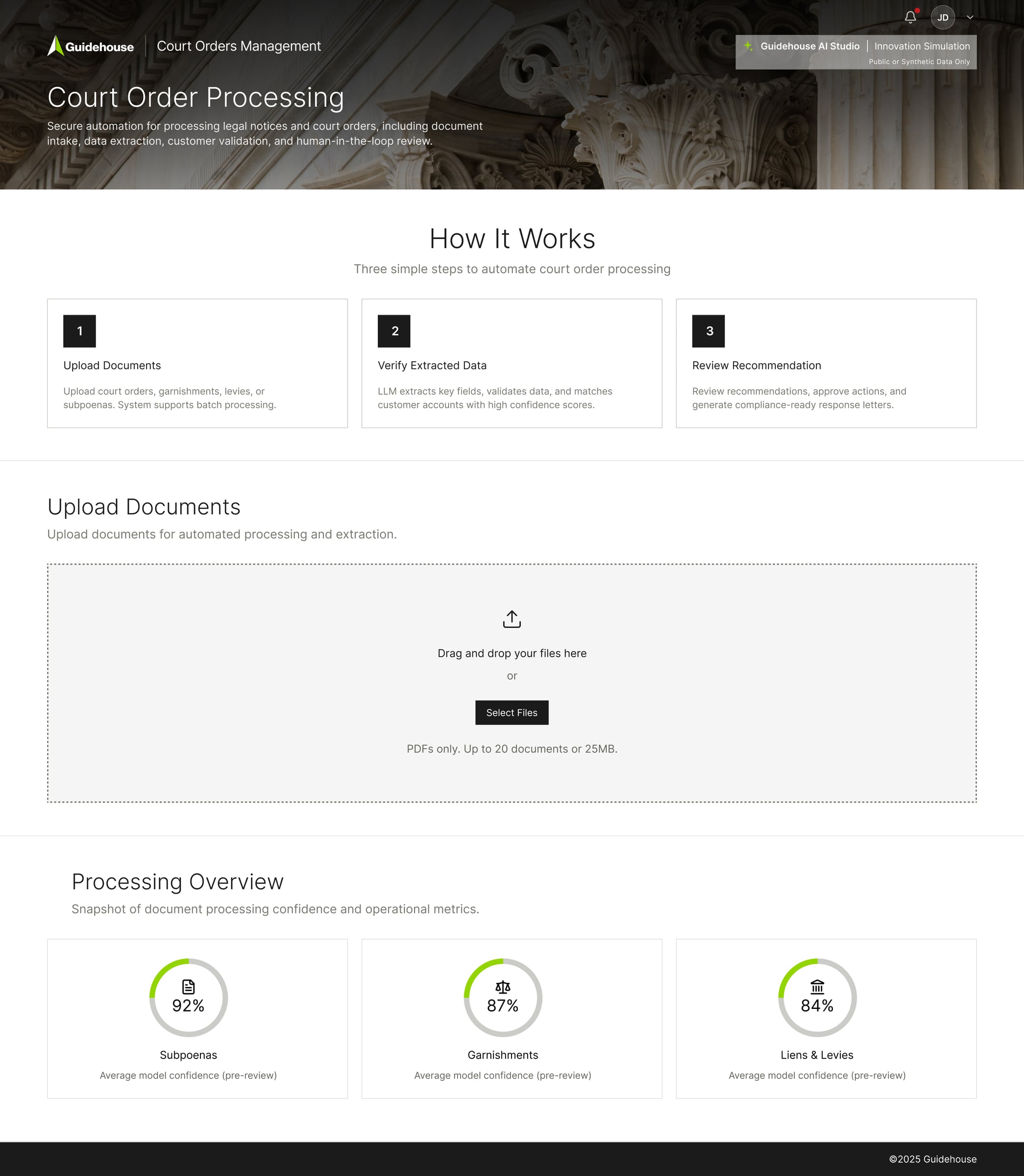

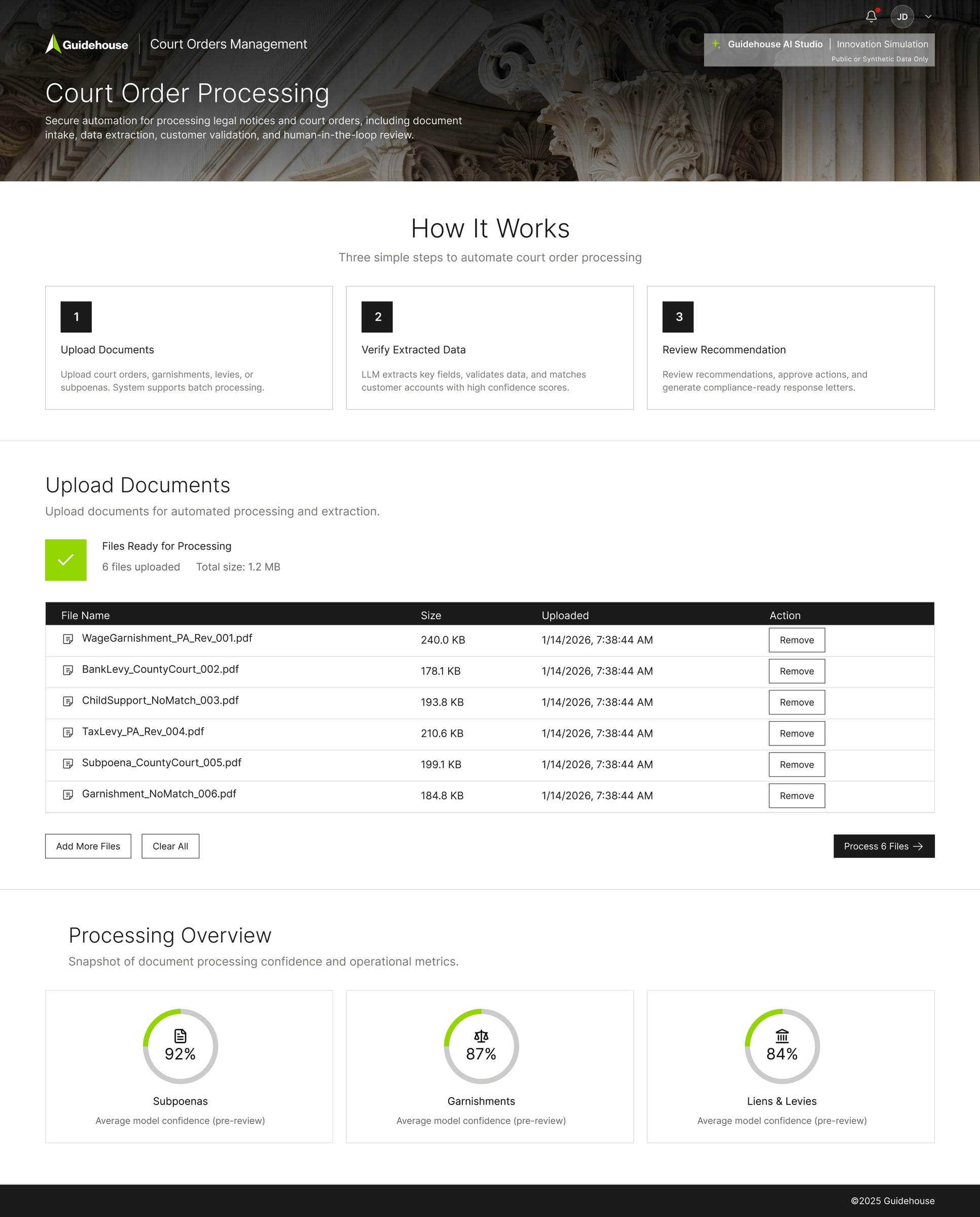

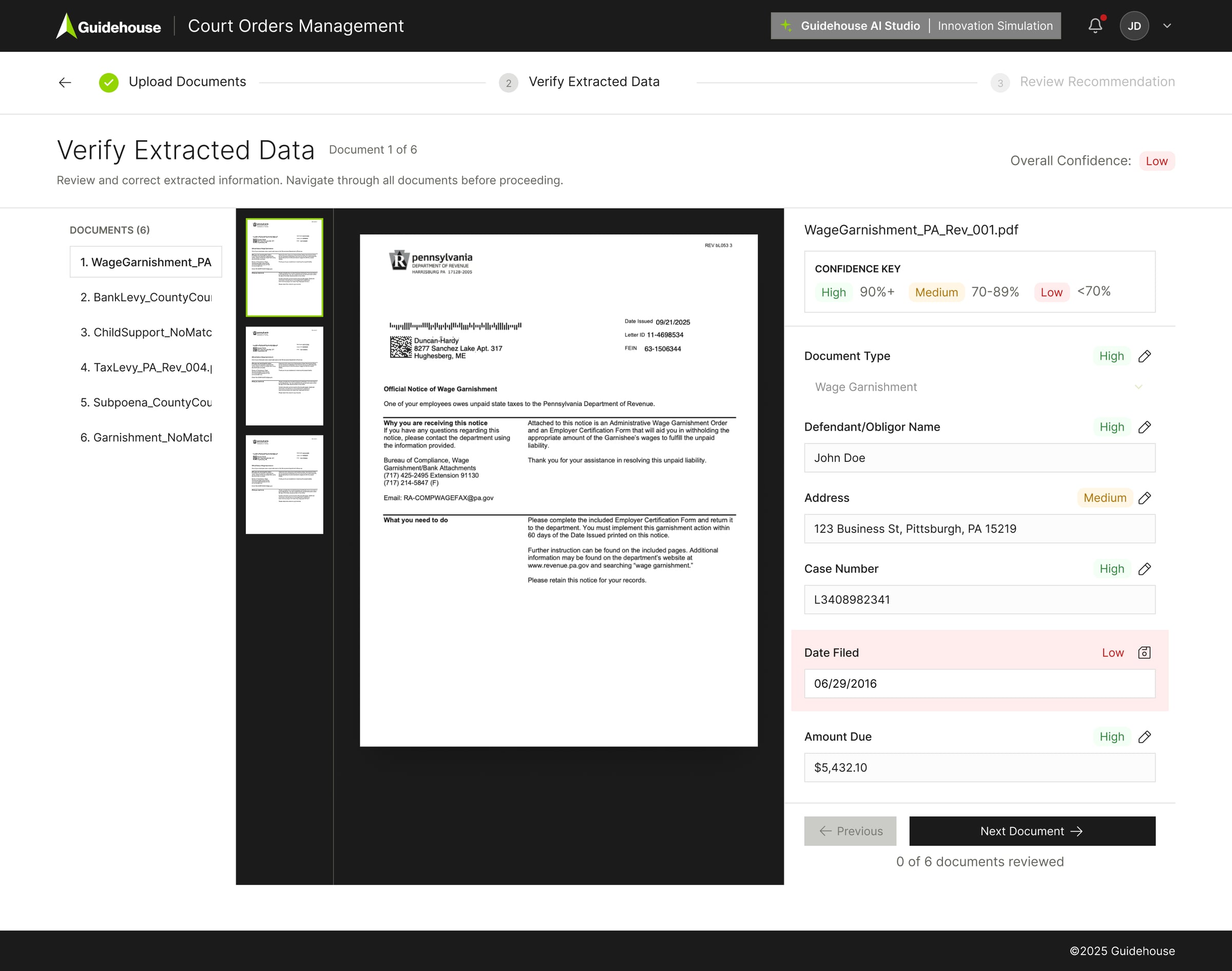

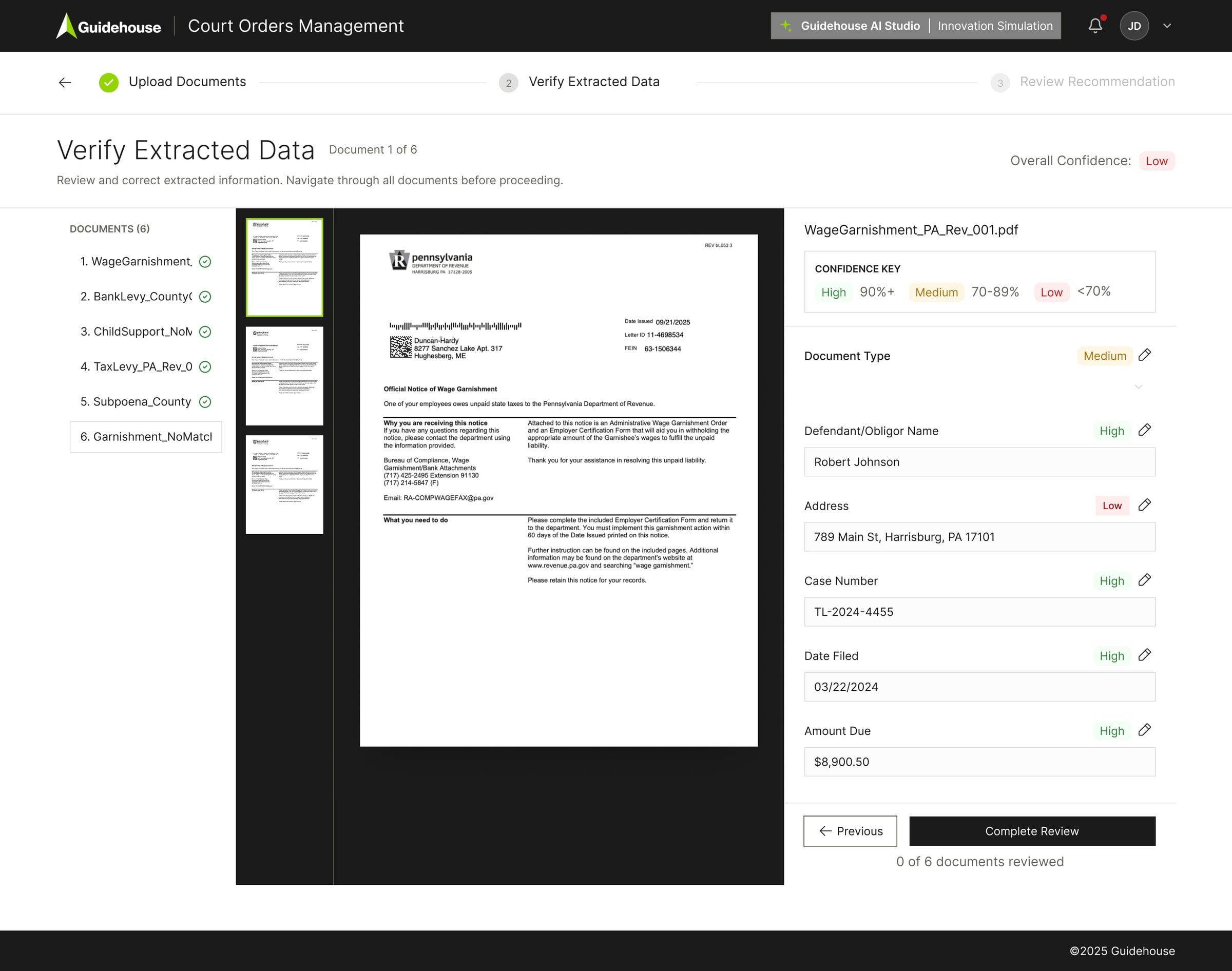

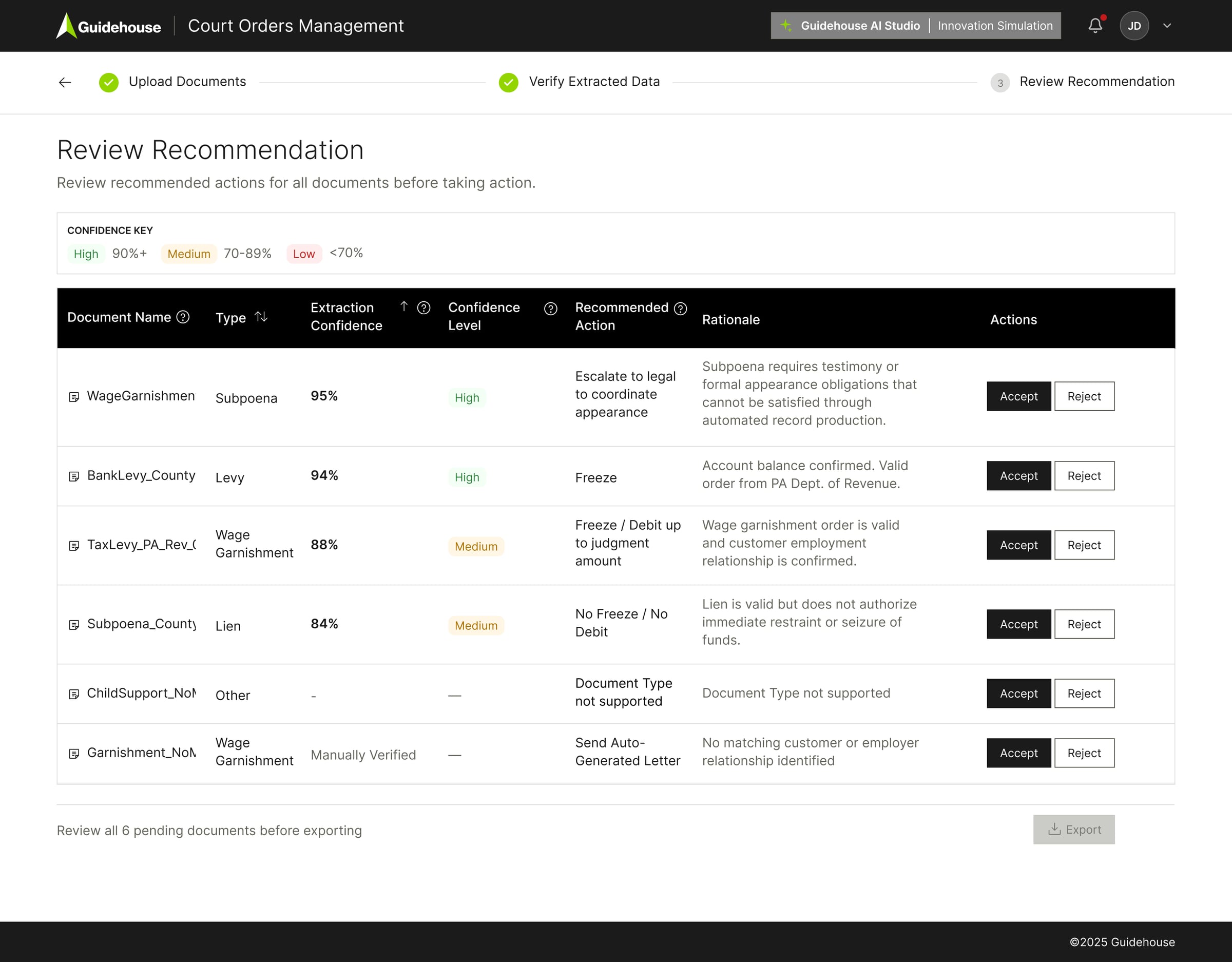

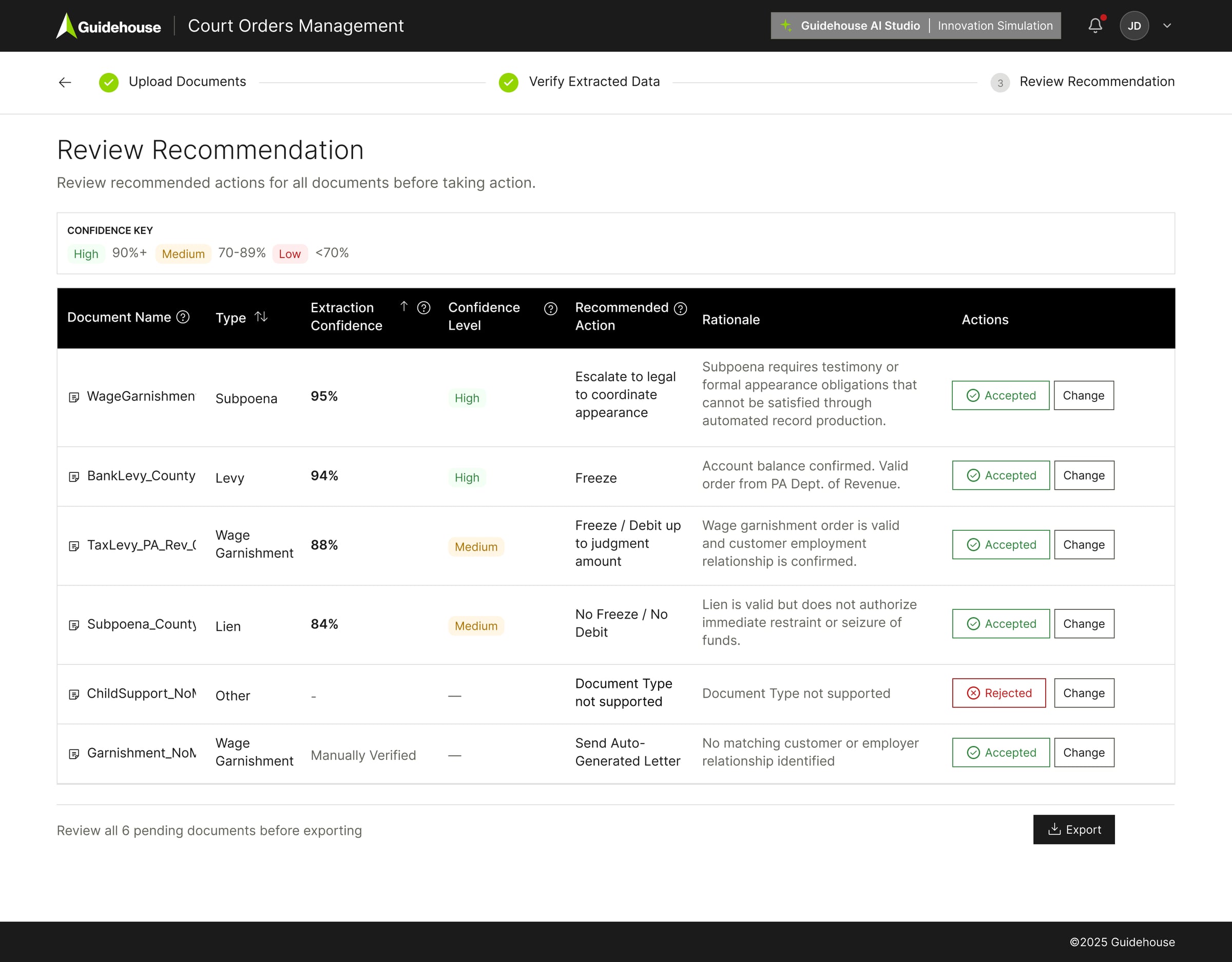

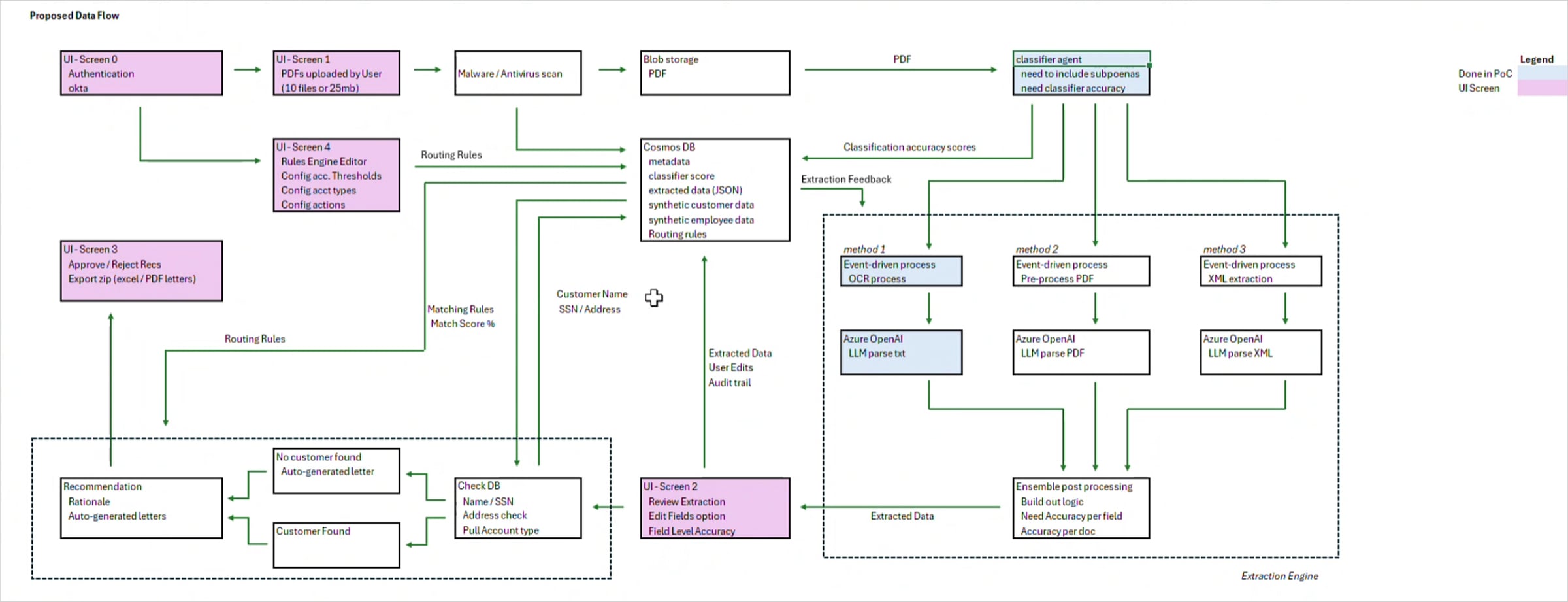

We iterated through multiple workflow versions and aligned on the MVP structure around the sixth major revision. We modeled decision paths, state transitions, edge cases, and override flows to remove ambiguity before development. Many backend steps were consolidated into three clear UI stages: Upload, Verify, Review. This is where the system matured from prototype logic to a product-grade workflow.

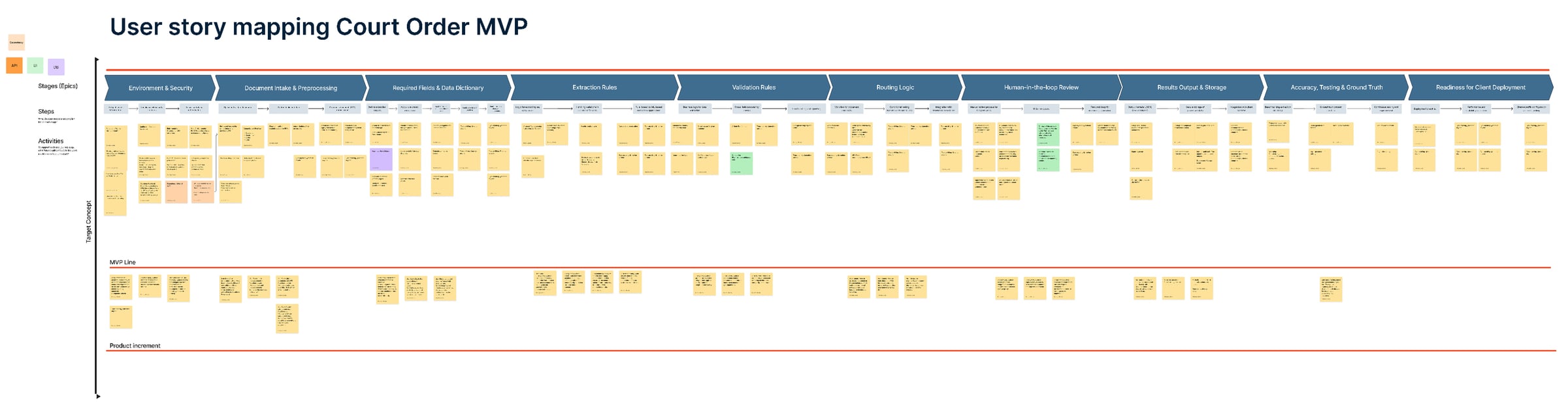

Product Shaping & Story Mapping

The shift from prototype logic to a product-grade workflow didn't happen by accident. It required product shaping, not just interface design. I was embedded directly in sprint cycles with product and engineering. Through story mapping sessions, we translated backend logic into explicit decision states. UX influenced routing rules, approval flows, and confidence handling. When confidence thresholds changed, UI states changed. My role was shaping how the system made decisions.

Key Product Decisions & Iterations

We tested adding an internal operations layer mid-workflow, then removed it to reduce complexity. We also designed an open Edit Mode — usable, but it introduced liability risk from a governance perspective. We removed it and redesigned a controlled, auditable version after SME discussions. These were product-level tradeoffs, not just UI refinements.

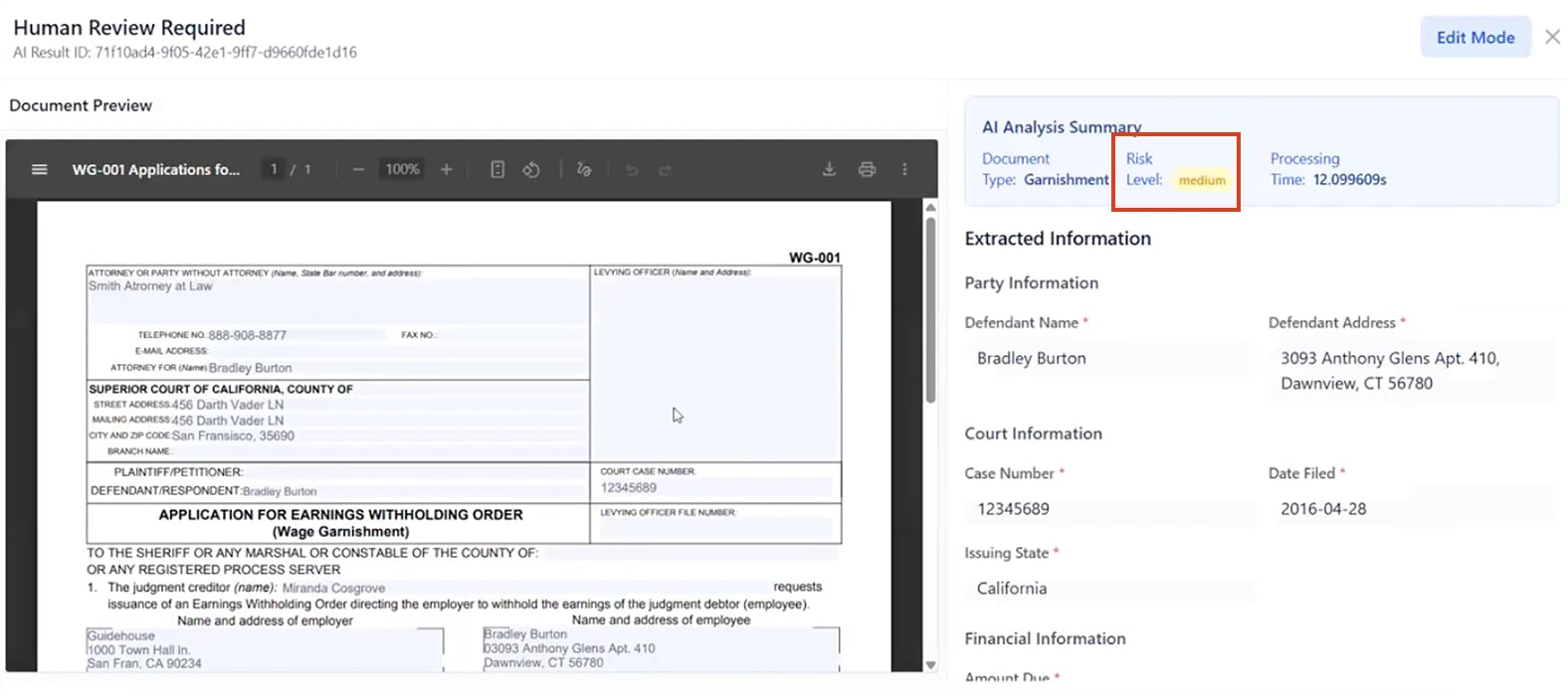

Edit Mode enabled

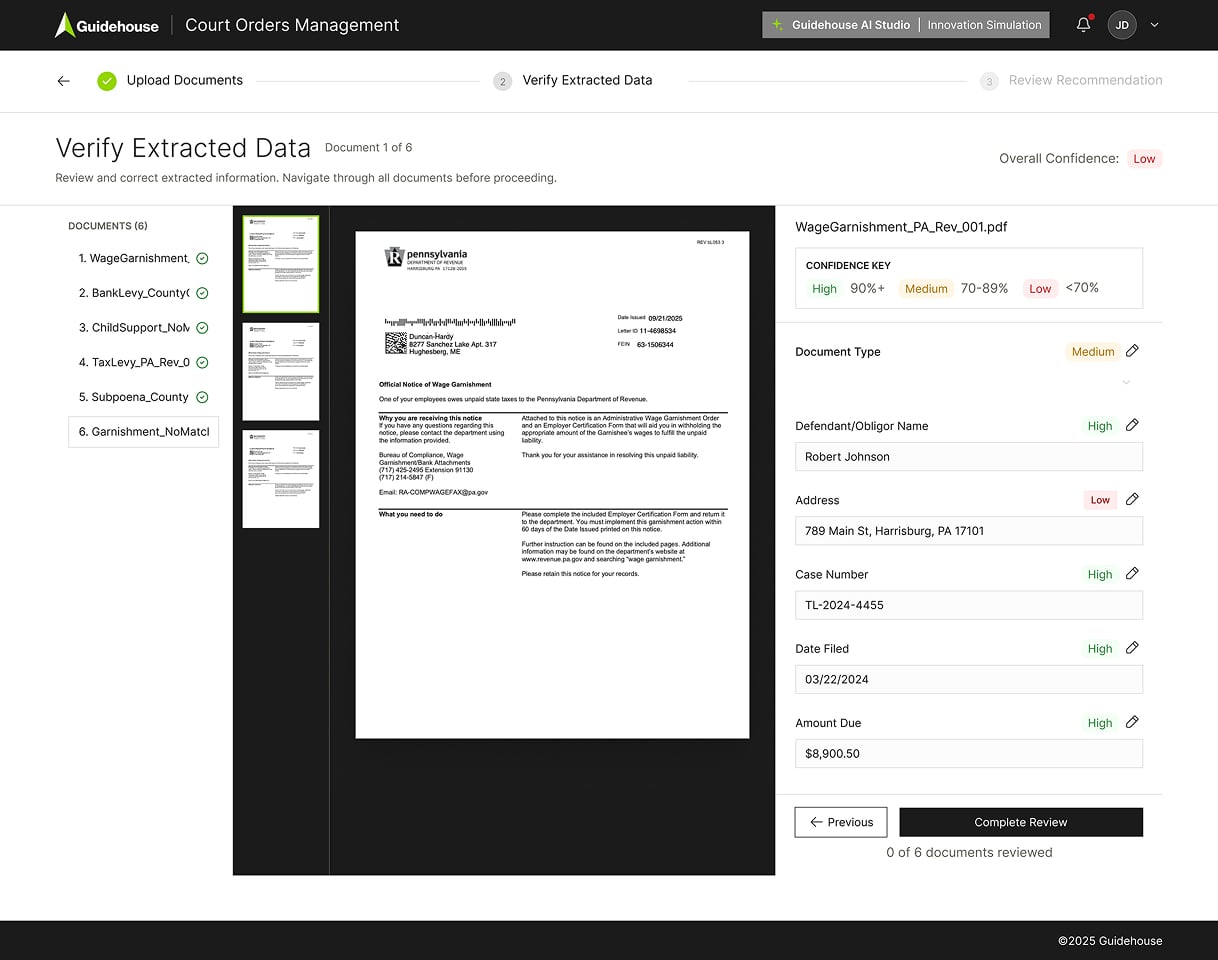

V1 — Edit Mode enabled: full inline field editing after AI extraction

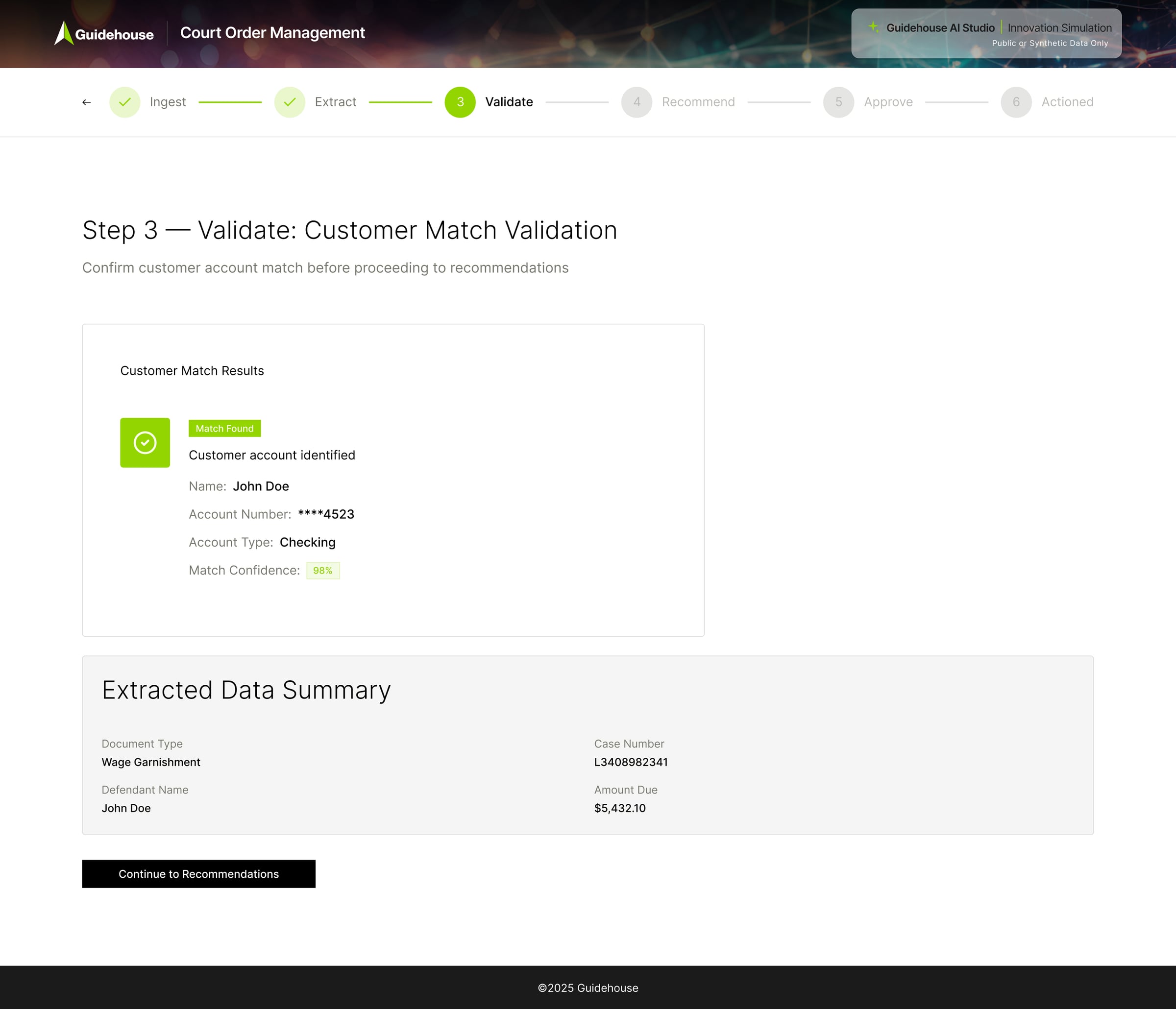

V1 — Customer Match Validation step with editable fields

Edit Mode removed

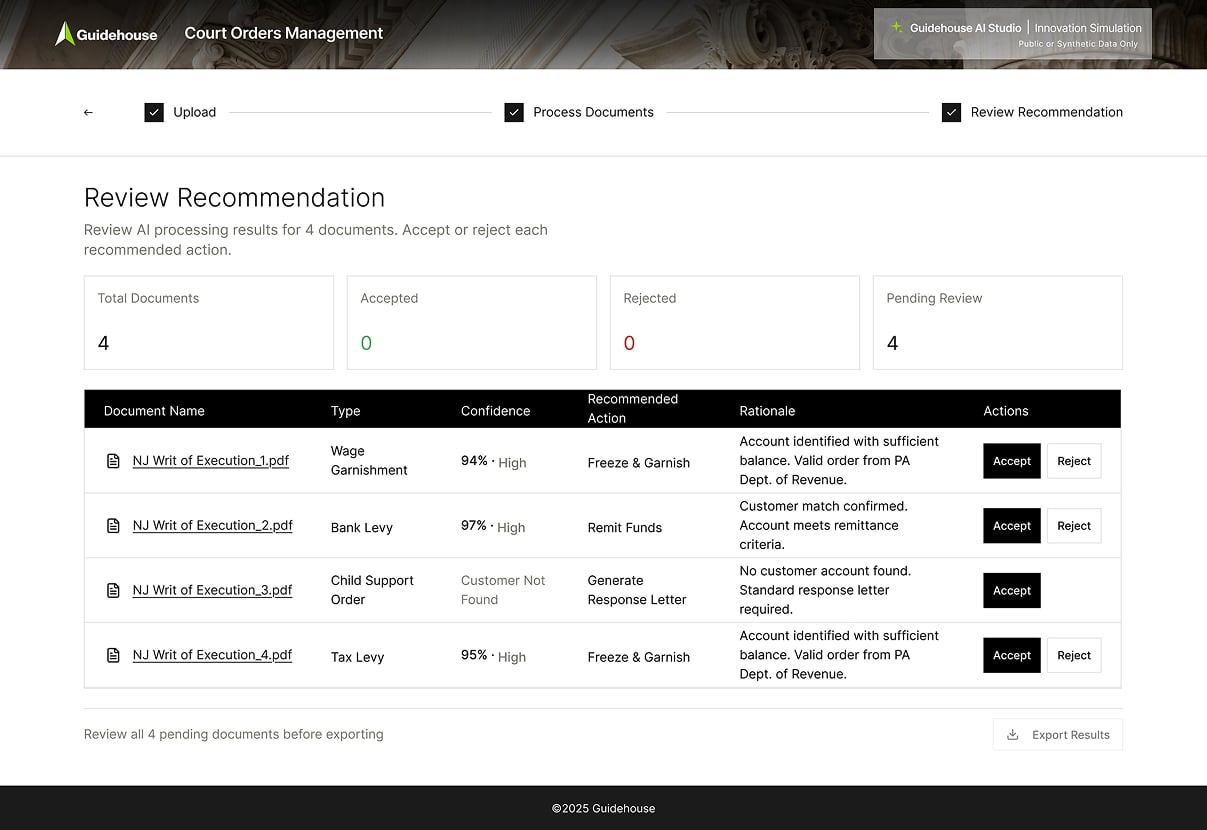

V2 — Edit Mode removed: bulk Review Recommendation table

Final decision

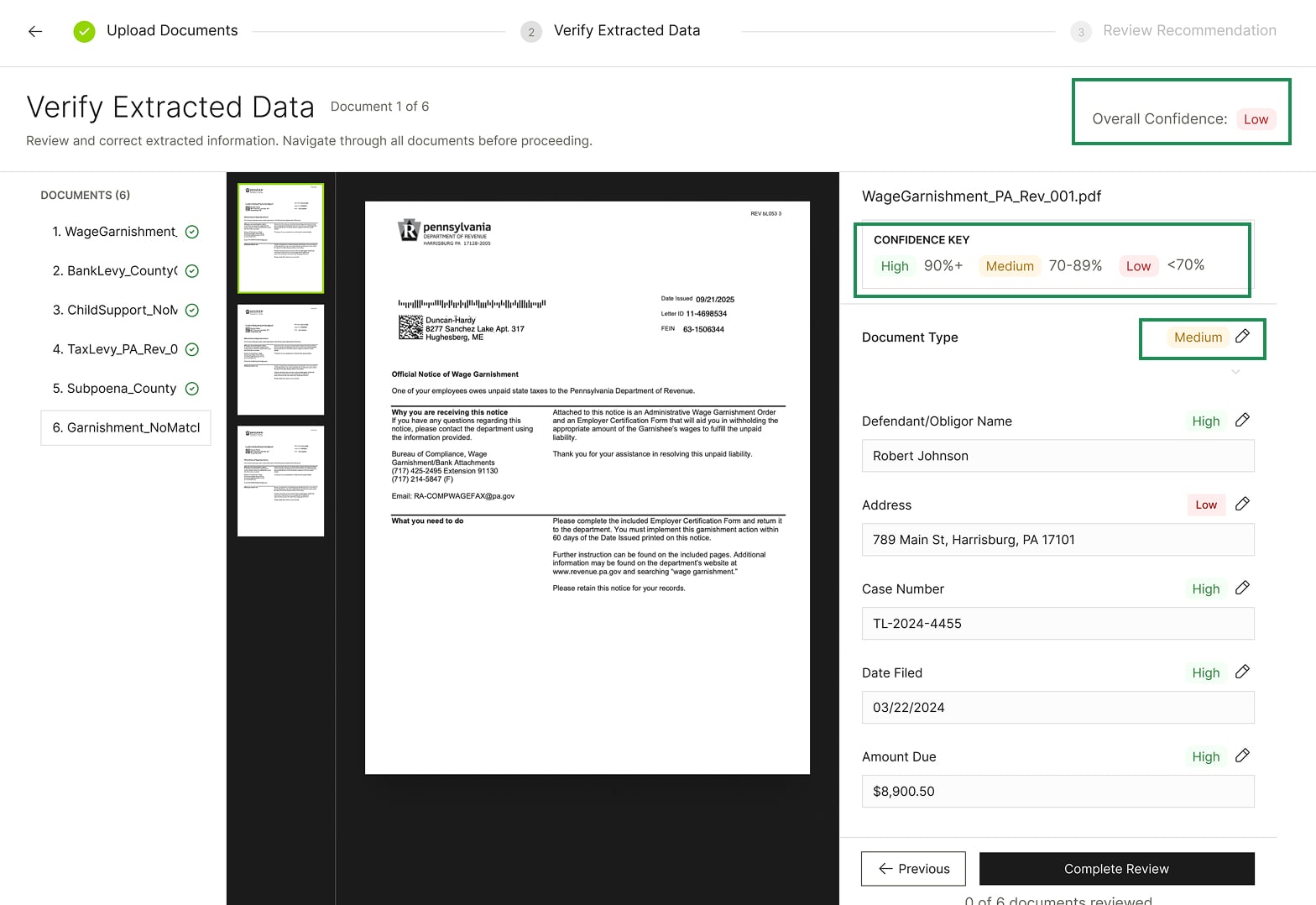

V3 — Final: per-field confidence scoring with structured decision states

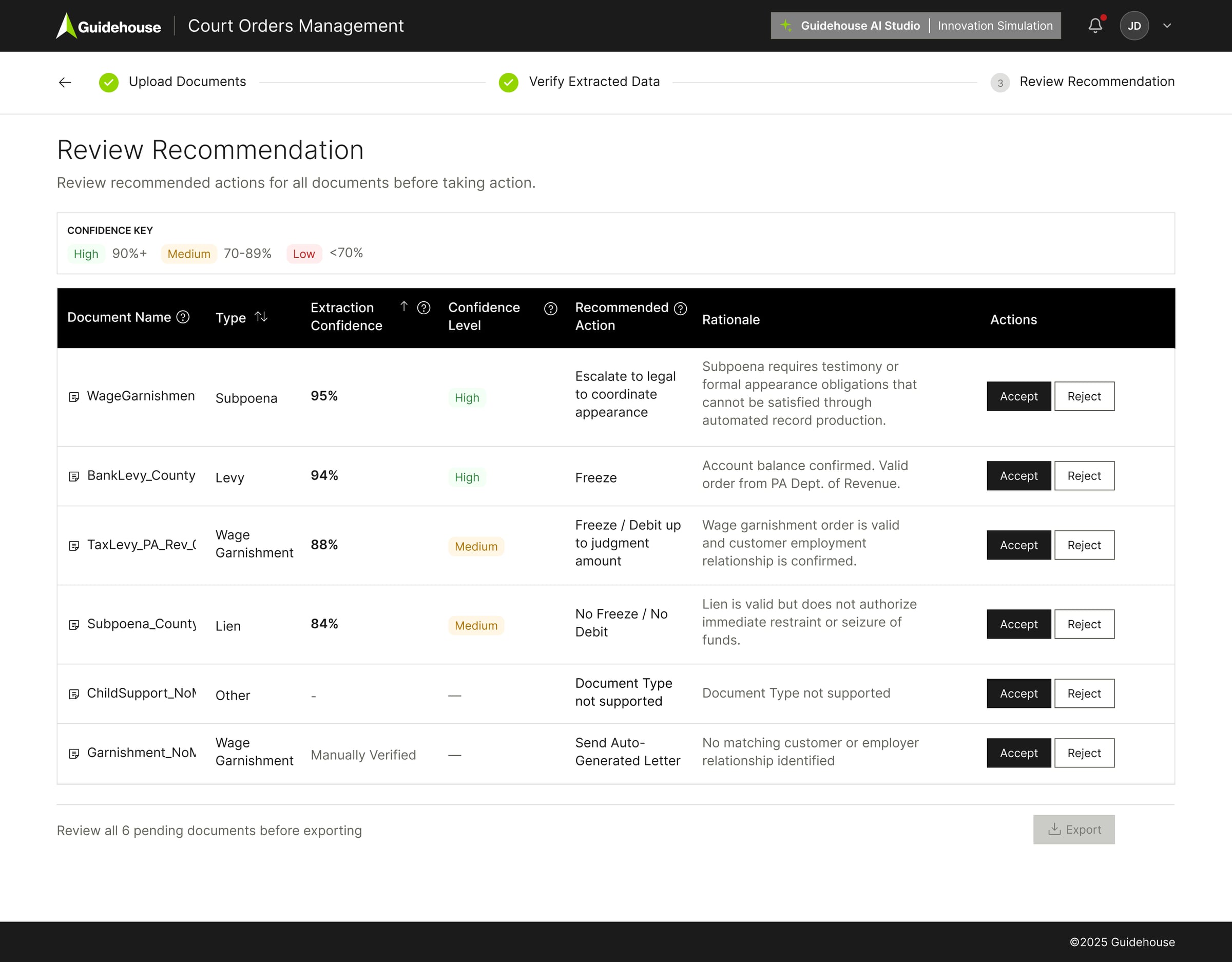

V3 — Final: Review Recommendation with Accept / Reject per document

Designing Trust in the Interface

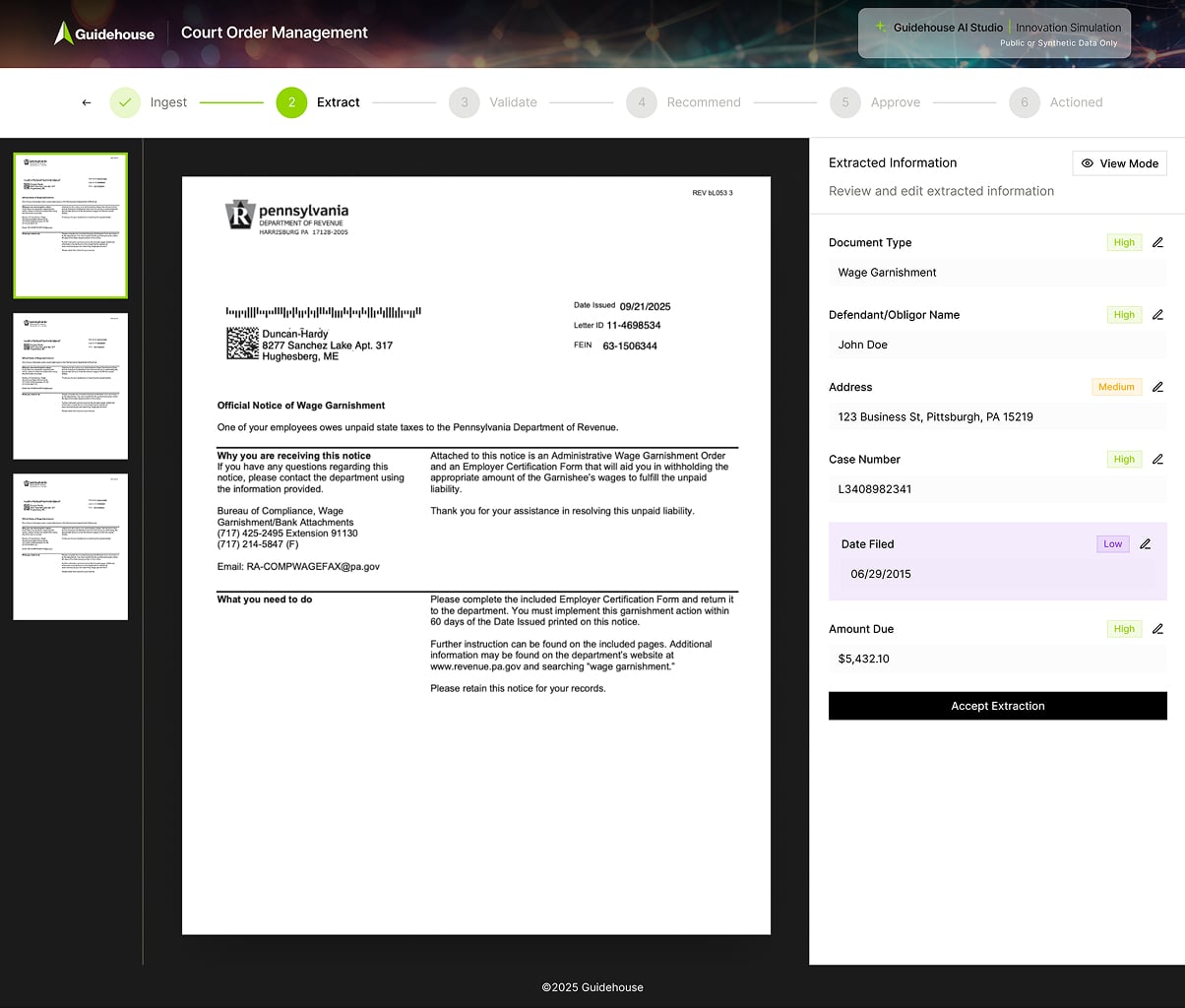

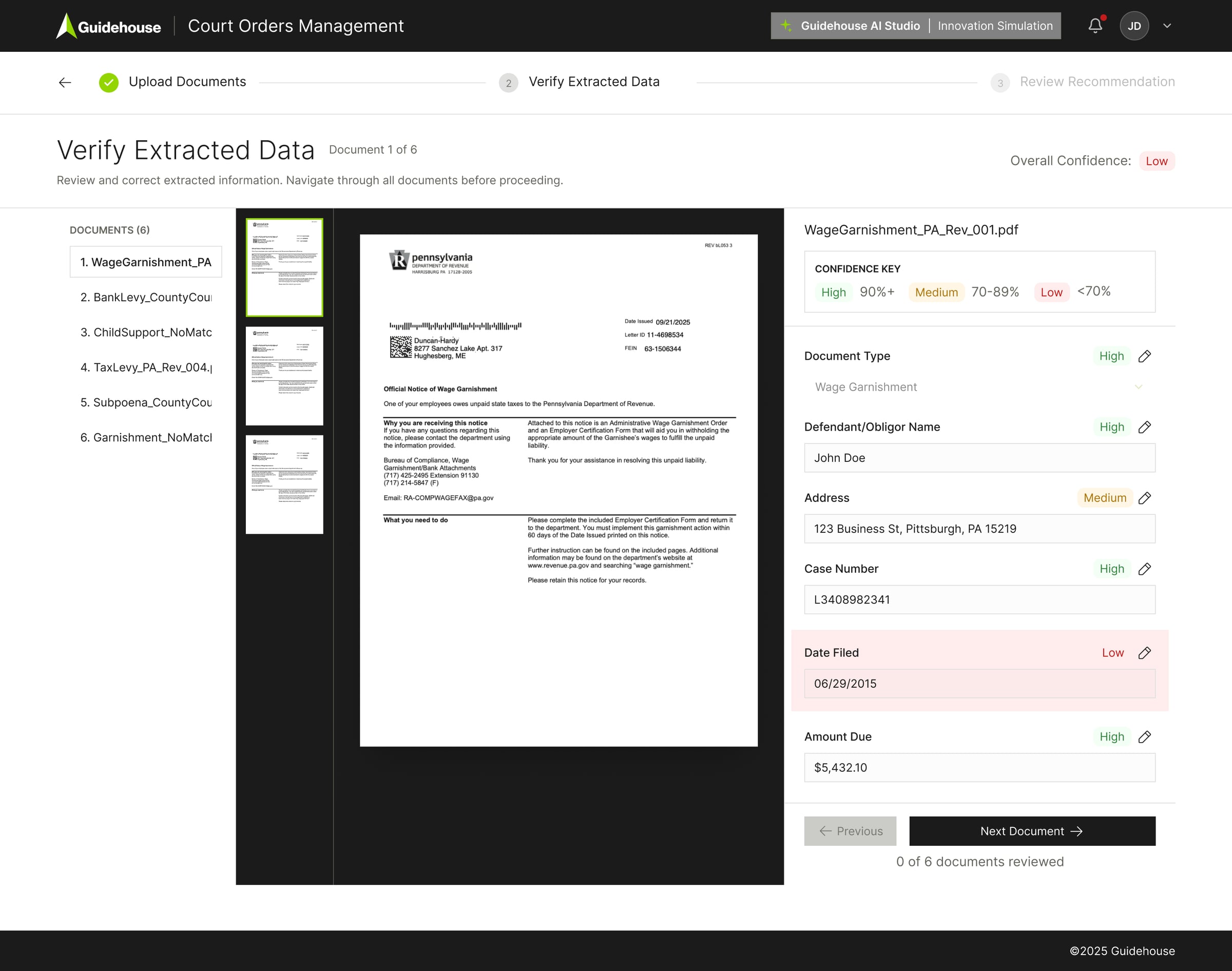

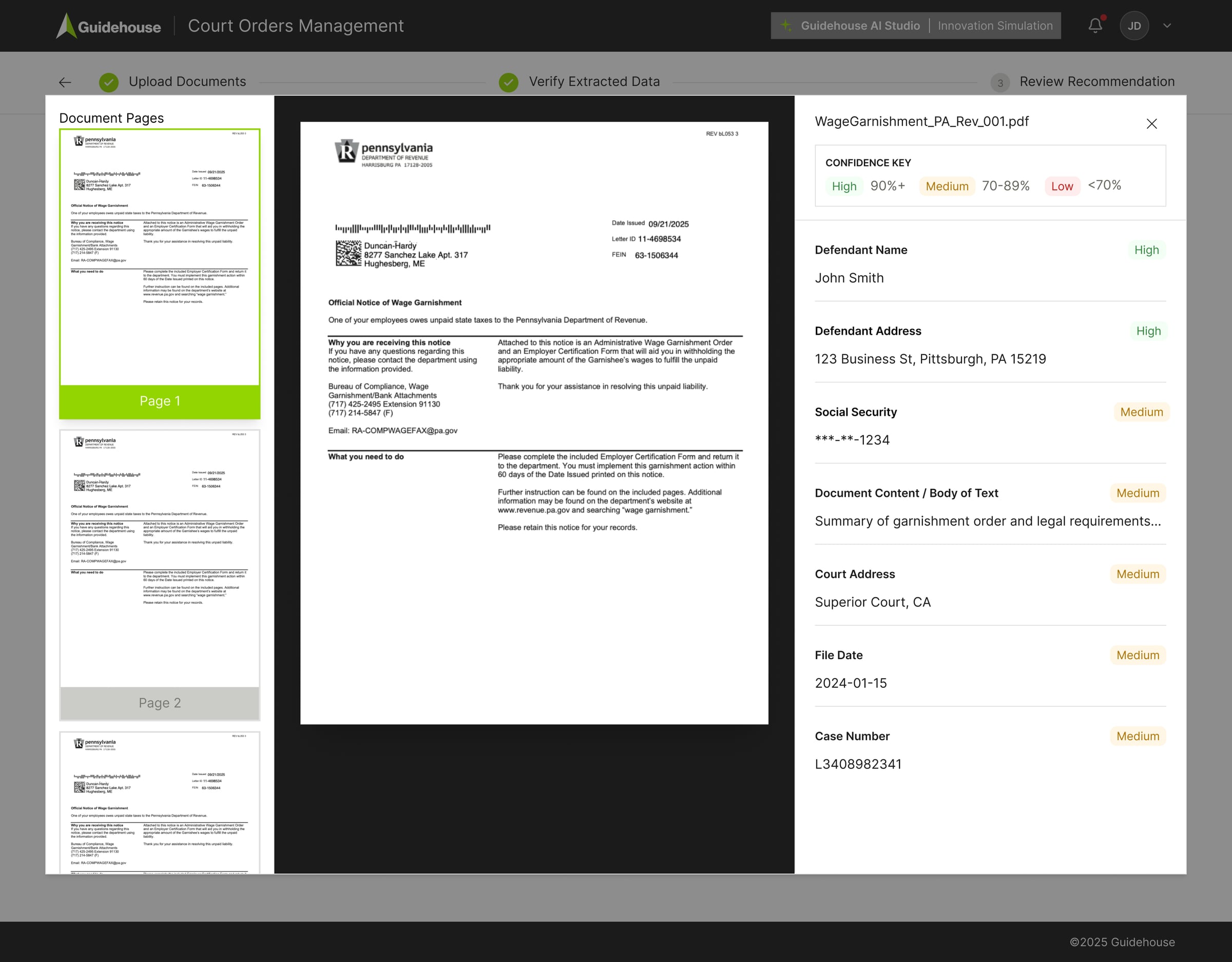

From Raw Confidence to Structured Decision States

In the POC, the model output was a single risk level at the top — Low, Medium, or High. Reviewers couldn't tell which fields drove that assessment or what required attention.

In the MVP, we broke that into field-level confidence states. Low-confidence fields were surfaced. Validation rules highlighted issues. Every override became traceable. Confidence stopped being a high-level signal. It became actionable guidance.

- Review required

- Suggested review

- Eligible

- Fully traceable

Each field shows: Confidence · Validation · Edit history · Override log
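To make the per-field model concrete, here is a minimal sketch of how one extracted field could carry those four facets. It is an illustration only, not the production schema: the class names, attribute names, and sample values are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class OverrideEntry:
    """One reviewer override, kept so the change stays traceable."""
    reviewer: str
    old_value: str
    new_value: str
    reason: str
    timestamp: datetime


@dataclass
class ExtractedField:
    """A single extracted field with the four facets shown per field (hypothetical shape)."""
    name: str
    value: str
    confidence: float  # model confidence as a fraction, 0.0 to 1.0
    validation_issues: list[str] = field(default_factory=list)
    edit_history: list[str] = field(default_factory=list)
    override_log: list[OverrideEntry] = field(default_factory=list)

    def override(self, reviewer: str, new_value: str, reason: str) -> None:
        """Replace the value and record who changed it, from what, and why."""
        self.override_log.append(
            OverrideEntry(reviewer, self.value, new_value, reason, datetime.now(timezone.utc))
        )
        self.edit_history.append(self.value)  # keep the previous value
        self.value = new_value


# Hypothetical example: a reviewer corrects a low-confidence amount.
amount = ExtractedField(name="garnishment_amount", value="1,250.00", confidence=0.62)
amount.override(reviewer="reviewer_a", new_value="1,520.00", reason="Digits transposed in scan")
```

The point of the sketch is that an override never overwrites silently: the old value, the reviewer, and the reason travel with the field.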

POC Review Screen

MVP Review Screen

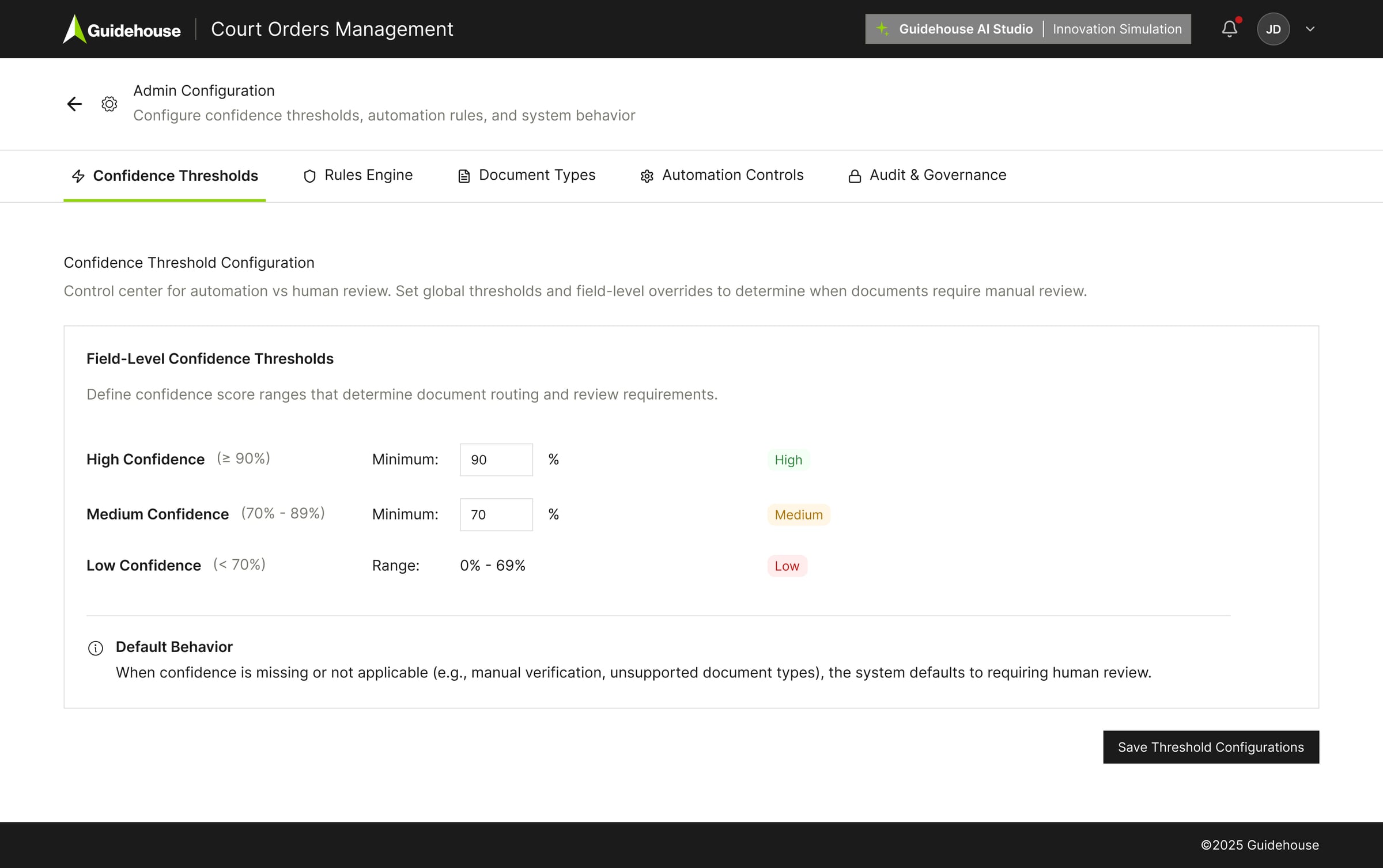

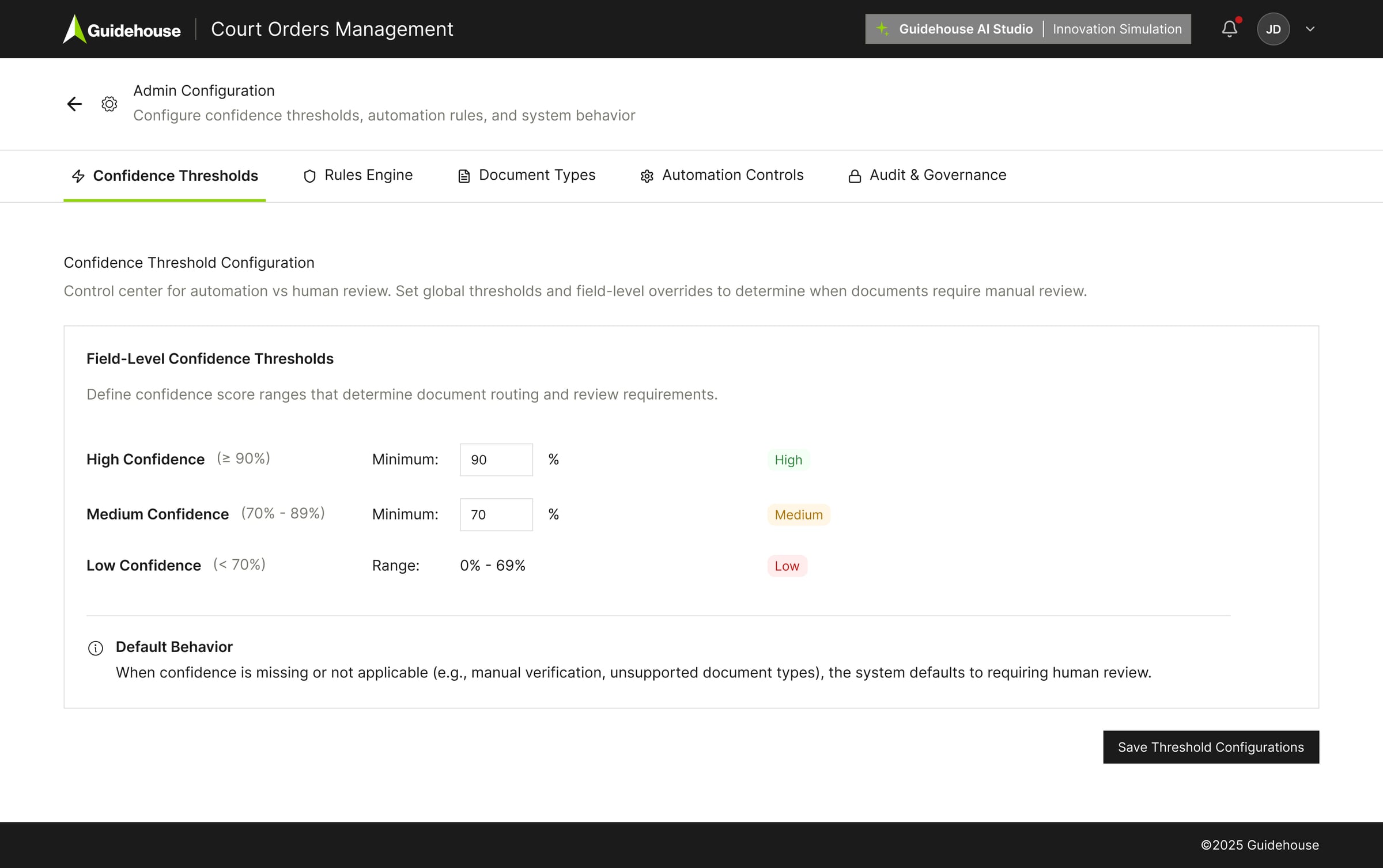

Admin Decision Framework

The layer that makes AI behavior predictable

We realized the admin layer wasn't configuration — it was the decision framework. The first layer converts raw AI confidence into structured review states: high, medium, low. This determines when human review is required and makes AI behavior predictable and auditable.

- Two-layer model explanation

- Confidence threshold configuration

- Non-technical admin control

Confidence Threshold Configuration: High ≥90% / Medium 70–89% / Low <70%
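The threshold layer can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the shipped implementation: the names `ConfidenceThresholds` and `review_state` are hypothetical, confidence is assumed to arrive as a fraction between 0 and 1, and values between 89% and 90% are assumed to fall into Medium.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfidenceThresholds:
    """Admin-configurable cutoffs, expressed as fractions (0.90 = 90%)."""
    high: float = 0.90
    medium: float = 0.70


def review_state(
    confidence: float,
    thresholds: ConfidenceThresholds = ConfidenceThresholds(),
) -> str:
    """Map a raw confidence score to a structured review state."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence must be between 0 and 1, got {confidence}")
    if confidence >= thresholds.high:
        return "high"
    if confidence >= thresholds.medium:
        return "medium"  # assumption: anything below the high cutoff, e.g. 89.5%
    return "low"


# An admin tightening the cutoffs changes the state without any code change.
strict = ConfidenceThresholds(high=0.95, medium=0.80)
```

Because the cutoffs live in a configuration object rather than in the function body, the same score can map to a different state when an admin adjusts the thresholds.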

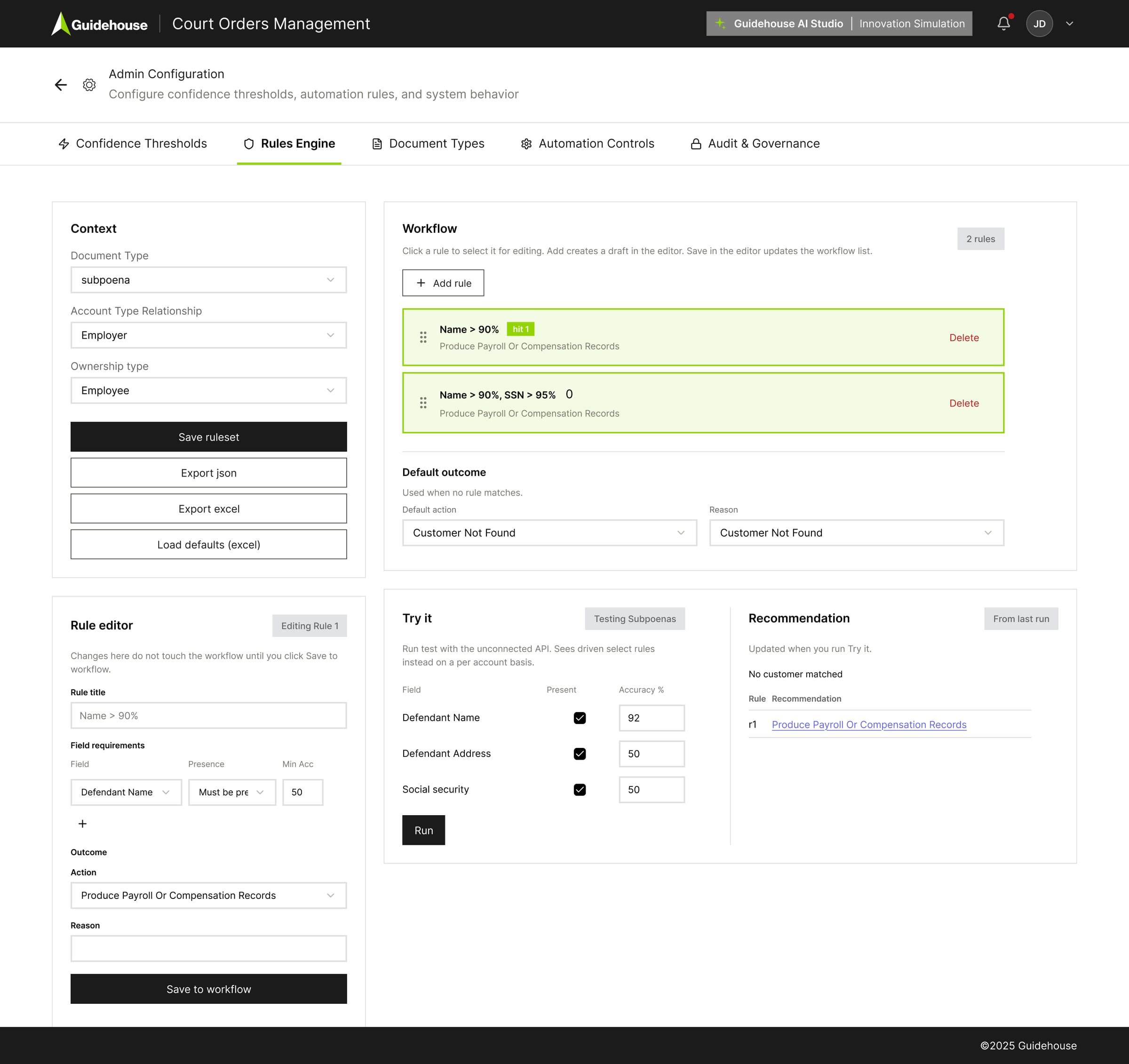

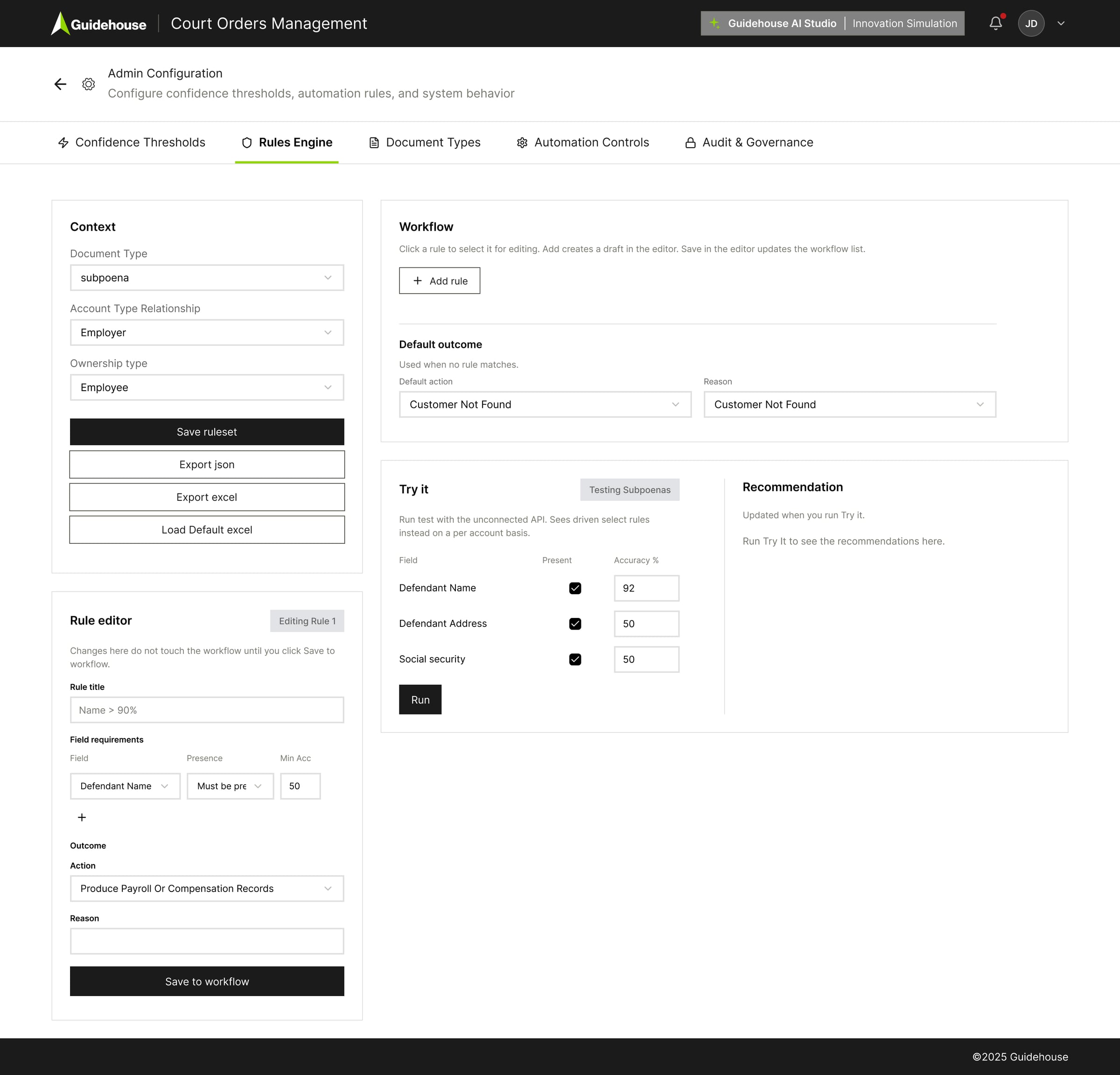

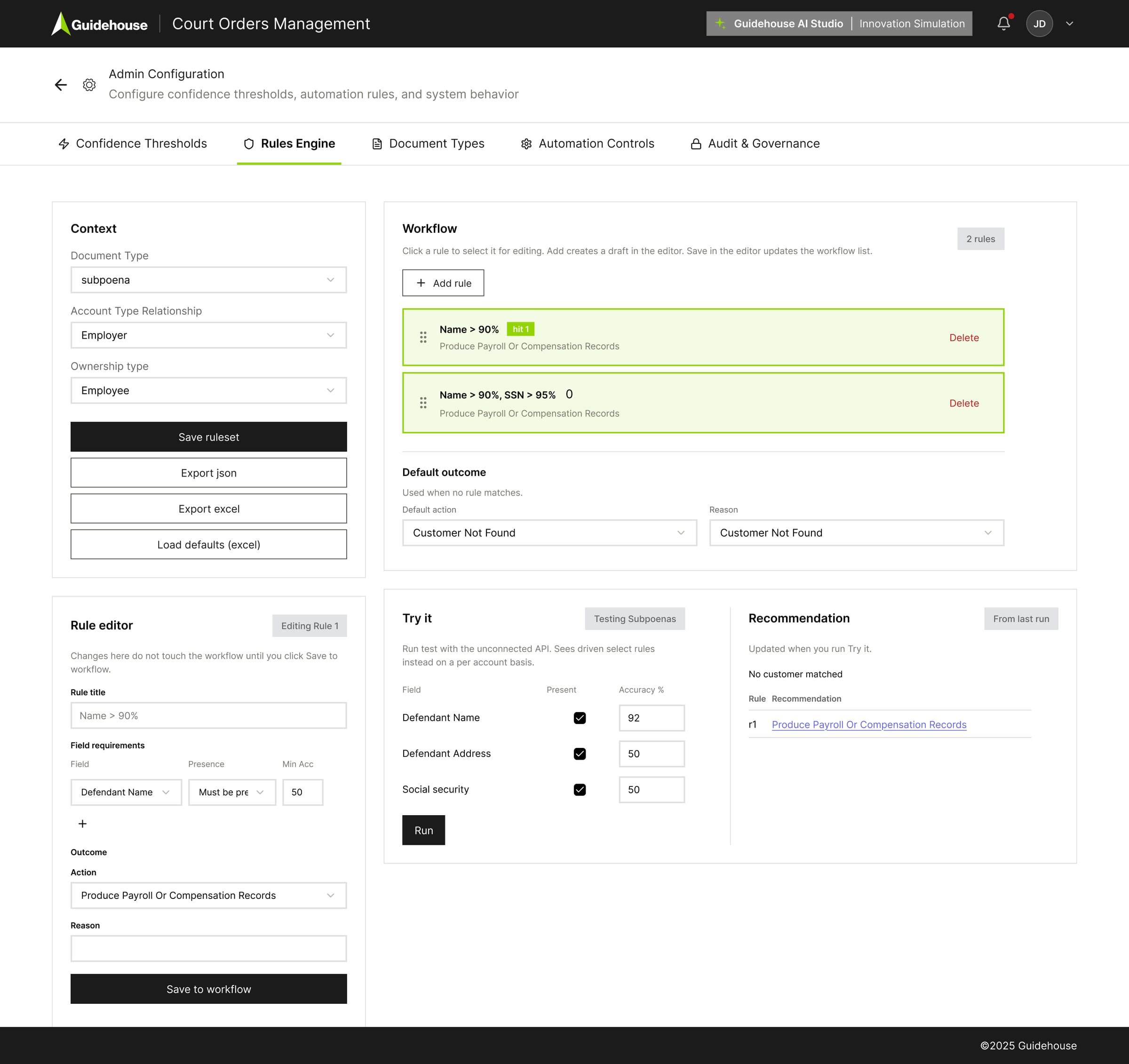

Rule Engine

Conditional logic without touching core code

The second admin layer translates extracted data and confidence thresholds into deterministic workflow actions.

- Rules are evaluated top to bottom — first match wins, with a clear fallback if nothing matches

- Supports jurisdiction-specific logic, document-type handling, and override behavior

- Configured without changing core system logic — making the platform scalable

Multi-rule stacking with live “Try it” test panel
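The first-match-wins evaluation described above can be sketched as follows. This is a hedged illustration rather than the actual engine: the `Rule` shape, the document keys, the rule names, and the action names are all hypothetical, and the fallback action is assumed to be manual review.

```python
from dataclasses import dataclass
from typing import Callable

Document = dict[str, str]  # hypothetical: extracted values plus the review state


@dataclass(frozen=True)
class Rule:
    name: str
    condition: Callable[[Document], bool]
    action: str


def evaluate(rules: list[Rule], doc: Document, fallback: str = "manual_review") -> tuple[str, str]:
    """Return (action, matched rule name); rules are checked top to bottom."""
    for rule in rules:
        if rule.condition(doc):
            return rule.action, rule.name  # first match wins
    return fallback, "fallback"  # nothing matched


# Hypothetical rule stack, ordered by priority.
rules = [
    Rule("low-confidence", lambda d: d["review_state"] == "low", "manual_review"),
    Rule("no-customer", lambda d: d["customer_match"] == "none", "send_no_customer_letter"),
    Rule(
        "ny-garnishment",
        lambda d: d["jurisdiction"] == "NY" and d["doc_type"] == "garnishment",
        "route_to_ny_queue",
    ),
]

doc = {"review_state": "high", "customer_match": "found", "jurisdiction": "NY", "doc_type": "garnishment"}
action, matched = evaluate(rules, doc)
```

Returning the name of the matched rule alongside the action is a design choice in this sketch: it gives the audit trail a record of why a document was routed the way it was.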

The Solution

A structured, configurable MVP covering the full court order lifecycle — from document intake through compliant response.

Core workflow

- Okta SSO authentication

- Batch upload (up to 20 PDFs)

- AI classification and extraction with per-field confidence scoring

- Human-in-the-loop verification and customer account matching

- AI-generated recommended actions with client approval workflow

- Compliance-ready response letter generation

Admin panel

- Configurable confidence thresholds

- Rules engine for conditional routing

- Document type management

- Automation controls

- Audit and governance tooling

Final Product — Screen Tour

Final State

Signed Off by PO and Compliance

I'm not showing this to highlight infrastructure. I'm showing it because this is the moment the workflow became contractually defined. Every decision state, routing rule, and override path we designed in the UI is reflected here in system logic. This is where design translated into operational architecture.

- Clarity

- Predictability

- Audit visibility

- Operational confidence

“What started as an AI extraction prototype evolved into a configurable decision workflow. We structured extraction. We formalized validation. We made human approval explicit. And we closed the loop with feedback and traceability. The goal wasn’t full automation — it was balancing probabilistic AI with human accountability. The result is a reusable framework for AI-driven decisioning, designed for clarity, governance, and scale.”

Final workflow diagrams signed off by PO

What this project delivered

- MVP signed off by Product Owner and compliance stakeholders

- Transformed raw AI risk tags into structured, auditable decision states

- Introduced per-field confidence scoring in place of a single document-level risk label

- Designed configurable rules engine enabling non-technical admin control

- Delivered full audit trail: confidence, validation, edit history, override log per field

Reflection

Design for high-stakes AI systems

This project was less about AI capability and more about what happens after the AI makes a decision. The model can extract and classify — but unless the reviewer understands why to trust it, the workflow breaks down. Designing trust isn't a UX polish problem. It's an architecture problem.

The constraints sharpened the thinking: no direct user access, an inherited prototype, and a compliance context that punished ambiguity. Every design decision — confidence thresholds, override logs, the rules engine — was made in service of one outcome: a human who could act with confidence and account for every step.